If you still regard games consoles as child’s play and their sound requirements as trivial, it is time to think again. And if you are involved in the composing, recording or postproduction markets, it is past time to think again.

Games are not only growing in sound sophistication; they also represent a growing and challenging opportunity, where new frontiers are being pioneered.

Consider this: console games set-ups often share home cinema real estate – an HD screen along with surround-sound and subs. And they use it. Soundscapes, dialogue, Foley, M&E are all prime games territory, and offer rich pasture for audio.

All of this was ably demonstrated at the recent Develop conference – one of two scheduled for the UK this year that sit alongside GameSoundCon in LA and San Francisco in demonstrating the growing importance and ingenuity of the sound side of games. More than this, they point to possibilities beyond our present understanding of postproduction, and the opportunity to evolve new ideas that cinema is too mature to embrace.

Games sound is a mixed up, cut-up, dynamic, manic world. But it’s also a place where less is frequently more, and making more out of less is a survival code.

Disk space and processing are limited resources that are the currency of sight, sound and system. Gameplay regularly runs into many hours but must be served by only minutes of recorded music that must share its storage and processing footprint with dialogue, Foley and FX. And clumsy repetition spells certain death.

And it’s not all flat out, maxed out madness. There’s an awful lot of method here. Games sound has its roots in the well-charted world of audio for film, calling on the established skills and talent pool of music composers, Foley artists, sound designers, effects designers, mixers and studios. It takes games into uncharted territory, and it’s attracting some serious sound players away from film and TV.

So let’s leave behind linear preconceptions of scoring, recording and delivery... and see where the game takes us.

Game on...

Despite having their roots in the Atari ST and Midi sequencers from the likes of Steinberg, Hybrid Arts and C-Lab, block recording and cut-and-paste editing are the blueprint for meeting the high demands and limited resources of creating games sound.

This was beautifully demonstrated at Develop by film and TV music composer Jason Graves, with an insight into two of his games assignments.

For a while, Graves had an enviable place in Hollywood’s sound scene studying under the likes of Jerry Goldsmith and Elmer Bernstein, but his love of music and dissatisfaction with the Hollywood way of working sent him home to North Carolina. Here, his ability to trade in everything from electronica and rock to symphonic scores made him a natural asset to the games world – giving him a new level to his career.

As well as being dubbed ‘the scariest game ever made’, Dead Space is Electronic Arts’ best-selling title and has won two BAFTA awards – a big slice of which is attributed to its sound and sound management.

Graves’ work for Dead Space characteristically involved composing and recording six stereo orchestral music tracks that are then dynamically looped and layered within the game in such a way as to provide a wealth of musical options that can be used as the game unfolds. The many possible and non-conflicting combinations of these tracks give the game a soundtrack that can hold its own against a major cinema score. Reinforcing the flexibility inherent in its composition and recording, the music’s apparent diversity is extended by ‘fear emitters’ (player proximity detectors) that modify the soundtrack as characters approach certain points in the game map – these are able to mimic the use of dramatic music in deterministic filmmaking in the nondeterministic world of gameplay.
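As a rough illustration of how a handful of recorded tracks can yield many musical options, the sketch below counts the layerings available from six stems. It assumes, purely for illustration, that any non-empty subset of the six tracks can play together; the stem names and the `layer_combinations` helper are hypothetical and are not taken from the game's audio engine.

```python
from itertools import combinations

# Hypothetical stem names standing in for the six stereo orchestral tracks.
STEMS = ["stem_1", "stem_2", "stem_3", "stem_4", "stem_5", "stem_6"]


def layer_combinations(stems):
    """Return every non-empty subset of stems that could be layered together.

    Assumption: all stems are mutually compatible, so any subset is valid.
    """
    return [
        combo
        for size in range(1, len(stems) + 1)
        for combo in combinations(stems, size)
    ]


mixes = layer_combinations(STEMS)
print(len(mixes))  # 63, i.e. 2**6 - 1
```

Under that assumption, six tracks give 63 distinct layerings before any looping or proximity-driven changes are applied.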

‘It is pretty straightforward for linear media where the composer/sound designer knows exactly what is going to happen – when and how long, exactly, a build-up should take, and when to play the big “boo!” stinger,’ says Dead Space audio director Don Veca, in an enlightening interview on the Original Sound Version website. ‘This is not the case for interactive media; the video game player determines how the scene plays out. Fear emitters are simply a “sphere of influence”; however, with this one tool, we can affect a myriad of audio sources, such as music, streamed ambience, adaptive ambience, reverb control, general mixing parameters or whatever.’
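The ‘sphere of influence’ idea Veca describes can be sketched in a few lines. This is only an illustration under stated assumptions: the `FearEmitter` class, the linear falloff and the parameter names in `apply_fear` are all hypothetical, and the real Dead Space implementation is not described in enough detail here to reproduce.

```python
import math
from dataclasses import dataclass


@dataclass
class FearEmitter:
    """A hypothetical proximity detector: a sphere placed on the game map."""
    x: float
    y: float
    z: float
    radius: float

    def intensity(self, px, py, pz):
        """Return 0.0 outside the sphere, rising linearly to 1.0 at its centre.

        The linear falloff is an assumption made for this sketch.
        """
        distance = math.dist((self.x, self.y, self.z), (px, py, pz))
        if distance >= self.radius:
            return 0.0
        return 1.0 - distance / self.radius


def apply_fear(intensity):
    """Map one intensity value onto several (made-up) audio parameters."""
    return {
        "music_tension_gain": intensity,          # bring in the tense layers
        "ambience_gain": 1.0 - 0.5 * intensity,   # duck the ambience
        "reverb_wet": 0.2 + 0.6 * intensity,      # open up the reverb
    }


emitter = FearEmitter(x=0.0, y=0.0, z=0.0, radius=10.0)
print(apply_fear(emitter.intensity(5.0, 0.0, 0.0)))
```

The point of the design is that a single value, driven by where the player chooses to walk, can steer music, ambience, reverb and mix settings together.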

Musically contrasting but conceptually similar, Graves’ second example was a taster of a forthcoming game, using a larger set of loops to create an entirely different atmosphere. Keeping to a strict 160bpm and the key of D (with a little modulation through E and A), again allows him to provide complex and varied music from relatively little recorded sound. This time, the weapons of engagement are drawn from the rock and electronic locker.
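One reason a strict tempo helps is simple arithmetic: at a fixed 160bpm every loop is a whole number of equal-length bars, so loops of different lengths can start and stop on shared boundaries. The sketch below works this through, assuming 4/4 time and a 48kHz sample rate; neither is stated in the talk, and the function names are hypothetical.

```python
BPM = 160              # from the example above
BEATS_PER_BAR = 4      # assumption: 4/4 time
SAMPLE_RATE_HZ = 48000 # assumption: a common game-audio sample rate


def loop_seconds(bars, bpm=BPM, beats_per_bar=BEATS_PER_BAR):
    """Duration in seconds of a loop lasting the given number of bars."""
    return bars * beats_per_bar * 60 / bpm


def loop_samples(bars, sample_rate=SAMPLE_RATE_HZ):
    """Loop length in samples, rounded to the nearest whole sample."""
    return round(loop_seconds(bars) * sample_rate)


for bars in (1, 2, 4, 8):
    print(bars, loop_seconds(bars), loop_samples(bars))
```

With these assumptions one bar lasts 1.5 seconds, so an eight-bar loop runs 12 seconds and any shorter loop repeats a whole number of times inside it.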

And all designed, composed, recorded and produced to a standard that Hollywood cannot better.

Film scoring, dance remixes, aleatoric (chance) composition, cut-ups, serial composition, beat matching, looping and other musical chops all have a bearing here. There is no escaping the fact that this is a radically new approach to the art of composing a musical score.

Other Develop speakers – including Mark Estdale on vocal characterisation, Kristofor Mellroth and Mark Yeend from Microsoft Game Studios Central Audio, Rockstar’s Alastair MacGregor and Naughty Dog/Sony PlayStation’s Phil Kovats – brought further insight into the games world and its relationship to better established music and audio working. Between them, they have a list of credits that puts them at the leading edge of their field. If games were once a poor relation to film and TV, they have grown into something richer and are draining talent from movie music and audio.

The shape of things to come

Where the mixing console embodies the power and sophistication of a movie post suite, games sound mixers are an altogether less impressive sight. In place of displays, lights and fields of controls are spreadsheets and lines of code that recall Basic programming on early home PCs. But they are every bit as powerful as their flashy counterparts.

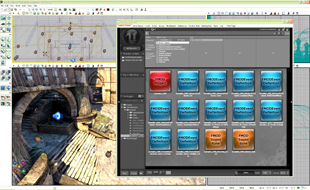

And with the development of middleware (software that sits between the game platform and game designers) such as Audiokinetic's Wwise and Firelight Technologies’ FMOD, this too is changing. ‘We’re trying to take a more pro audio approach,’ says Firelight’s Brett Patterson. ‘Instead of having something that looks like a spreadsheet, we want something that’s recognisable to any audio person.’

Looking more like DAW screens, middleware mixers have capabilities beyond those of a postproduction desk, reflecting games’ demands for functions that lie beyond the remit of post…

Everything in games is interactive and needs to be available at a moment’s notice for real-time delivery. This begins with the mix of dialogue, Foley, effects, music and stingers before calling on the extensive use of filters and reverbs that enable sounds to be placed at distance and in environments. And everything has to respond to the gameplay. ‘What was old is new again,’ Phil Kovats asserts. ‘A linear approach doesn’t work any more – we have to be able to place something in a virtual world and make it sound as if it’s part of that world. Sound touches the whole game, every single part. No other games artist does that.’
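To give a flavour of what placing a sound ‘at distance’ can involve, the sketch below derives two mix parameters from the listener's distance to a source. The level drop uses the standard inverse-distance law (about 6dB per doubling of distance); the low-pass mapping, the constants and the function names are assumptions chosen for illustration and do not reflect how any particular engine or middleware does it.

```python
import math

REFERENCE_DISTANCE_M = 1.0   # assumption: distance at which the sound is at full level
MAX_DISTANCE_M = 50.0        # assumption: beyond this the filter stops closing


def distance_gain_db(distance_m):
    """Level change in dB using the inverse-distance law."""
    d = max(distance_m, REFERENCE_DISTANCE_M)
    return -20.0 * math.log10(d / REFERENCE_DISTANCE_M)


def lowpass_cutoff_hz(distance_m, near_hz=20000.0, far_hz=2000.0):
    """Hypothetical mapping: close the low-pass filter linearly with distance."""
    d = min(max(distance_m, REFERENCE_DISTANCE_M), MAX_DISTANCE_M)
    t = (d - REFERENCE_DISTANCE_M) / (MAX_DISTANCE_M - REFERENCE_DISTANCE_M)
    return near_hz + t * (far_hz - near_hz)


for d in (1.0, 2.0, 10.0, 50.0):
    print(d, round(distance_gain_db(d), 1), round(lowpass_cutoff_hz(d)))
```

Because the player can move at any moment, values like these have to be recalculated continuously rather than fixed once at the mix stage.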

Working beyond the limits and limitations of cinema’s determinism offers yet greater opportunities, however. Listening to sound designer and movie producer Alex Joseph’s foray into the psychological aspects of sound design, it is clear that the language of film sound is now well set. And while it uses some clever techniques to manipulate audience expectations and emotions, there are many unexplored possibilities that movies are simply too mature to address.

He is well placed to know, having studied sound and psychology before going on to work on a huge catalogue of movies including Vantage Point, several Bond films, the Harry Potter series and a number of Tim Burton films. He maintains that while film sound commands a set of expectations that help define it, games are still defining their rules. It is a new frontier…

In the real world, the speed of sound is easily bettered by the speed of light – and also by the muzzle velocity of most firearms. As a result, we are quite used to seeing lightning before hearing the accompanying thunder. But we could also be hit by a bullet before hearing the gun that fired the shot.
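The size of that gap is easy to estimate. The sketch below takes the speed of sound as roughly 343m/s (dry air at about 20°C) and assumes an illustrative, constant bullet speed of 900m/s; real bullets slow in flight, so treat the figure as a rough guide only. The function name is hypothetical.

```python
SPEED_OF_SOUND_M_S = 343.0           # approximate, dry air at about 20 °C
ASSUMED_MUZZLE_VELOCITY_M_S = 900.0  # illustrative assumption; drag is ignored


def arrival_gap_seconds(distance_m,
                        bullet_speed=ASSUMED_MUZZLE_VELOCITY_M_S,
                        sound_speed=SPEED_OF_SOUND_M_S):
    """Seconds by which the bullet arrives ahead of the sound of the shot."""
    return distance_m / sound_speed - distance_m / bullet_speed


for distance in (100.0, 300.0, 600.0):
    print(distance, round(arrival_gap_seconds(distance), 2))
```

On these assumptions, a shot fired from 300m away would land roughly half a second before it was heard.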

In film world, we have come to accept that the sound and impact of bullets are time aligned. In games world, however, we are not yet limited by such conventions – nor have we begun to explore the entertainment opportunities or psychological possibilities that accompany alternative rules.

Anybody else hungry for a new audio frontier?